The SnowPro Advanced: Architect Certification Exam (ARA-C01) is designed for experienced Snowflake professionals who architect and optimize enterprise data platforms. This exam validates your ability to design secure, scalable, and performant Snowflake solutions across complex organizational environments. Passing ARA-C01 demonstrates mastery of advanced Snowflake concepts and positions you as a trusted architect within the SnowPro Certification path. This page provides a clear roadmap of exam topics, question formats, and practical preparation strategies to help you succeed.

Use this topic map to guide your study for Snowflake ARA-C01 (SnowPro Advanced: Architect Certification Exam) within the SnowPro Certification path.

The ARA-C01 exam uses multiple question types to assess both conceptual knowledge and applied decision-making in real-world Snowflake scenarios. Questions progress in difficulty and require you to think beyond definitions into architectural trade-offs and optimization choices.

Questions reward practical reasoning and the ability to weigh trade-offs between security, performance, cost, and scalability.

Effective preparation for ARA-C01 requires systematic study of each domain paired with hands-on practice. Allocate time in proportion to each domain's weight, and test yourself regularly to find gaps before exam day. Link concepts across domains: for example, understand how security policies affect performance, or how data engineering choices influence warehouse design.


Strengthen your preparation with up‑to‑date resources from validexamdumps.com. These materials align to ARA-C01 and cover practical scenarios with clear explanations.


Snowflake Architecture and Performance Optimization typically account for a larger portion of the exam, reflecting the architect's primary responsibility to design efficient, scalable systems. However, all four domains are tested, and security questions often involve nuanced scenarios that require careful analysis. Allocate study time based on domain weight, but ensure you have solid foundational knowledge across all areas.

In practice, these domains overlap significantly. For example, your account and security design (Domain 1.0) determines which users can access which warehouses (Domain 2.0), which in turn affects data pipeline permissions (Domain 3.0) and query performance visibility (Domain 4.0). Understanding these connections helps you answer scenario-based questions that test integrated thinking rather than isolated facts.

Snowflake recommends at least 18-24 months of experience with Snowflake in production environments, including exposure to account administration, warehouse configuration, and query optimization. Hands-on labs focusing on multi-cluster warehouses, query profiling, and role-based access control will strengthen your ability to answer practical questions. If you lack production experience, prioritize lab exercises that simulate real deployment scenarios.

Candidates often overlook the trade-offs between cost and performance, choosing the fastest solution without considering resource monitor limits or budget constraints. Another common error is misunderstanding how clustering and partitioning interact with query pruning. Finally, many rush through scenario questions without fully reading all answer options; the correct answer often depends on a subtle detail like "in a multi-region setup" or "with minimal latency requirements."
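Snowflake keeps metadata for each micro-partition, including the minimum and maximum value of each column, and skips micro-partitions whose range cannot satisfy a filter. The toy model below is a simplified sketch of that min/max pruning idea, not Snowflake's implementation: it assumes a single integer column, fixed-size partitions filled in load order, and a simple range predicate. It shows why clustering matters: the same 100 values prune well when loaded in sorted order and not at all when scattered.

```python
from dataclasses import dataclass


@dataclass
class MicroPartition:
    """Simplified stand-in for a micro-partition: min/max metadata for one column only."""
    min_val: int
    max_val: int


def build_partitions(values, rows_per_partition):
    """Chunk values in arrival (load) order and record the min/max of each chunk."""
    parts = []
    for start in range(0, len(values), rows_per_partition):
        chunk = values[start:start + rows_per_partition]
        parts.append(MicroPartition(min(chunk), max(chunk)))
    return parts


def partitions_to_scan(parts, lo, hi):
    """Indices of partitions whose [min, max] range overlaps lo <= x <= hi.

    Every other partition cannot contain a matching row and is pruned unread.
    """
    return [i for i, p in enumerate(parts) if p.max_val >= lo and p.min_val <= hi]


# Hypothetical column values 1..100, loaded either scattered or sorted (well clustered).
scattered = [(i * 37) % 100 + 1 for i in range(100)]
clustered = sorted(scattered)

for label, values in (("scattered", scattered), ("clustered", clustered)):
    parts = build_partitions(values, rows_per_partition=10)
    hits = partitions_to_scan(parts, lo=41, hi=50)
    print(f"{label}: scan {len(hits)} of {len(parts)} partitions")
```

With these inputs the scattered layout must scan all 10 partitions, because every chunk's min/max range spans the filter, while the clustered layout scans only 1.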

In your final week, focus on weak areas identified during practice tests rather than re-reading entire topics. Do one full-length timed mock under exam conditions, then spend 2-3 hours reviewing explanations for questions you missed or guessed on. Avoid cramming new material; instead, reinforce connections between domains and practice pacing so you finish with time to review flagged questions.

A data share exists between a data provider account and a data consumer account. Five tables from the provider account are being shared with the consumer account. A role in the consumer account has been granted the IMPORTED PRIVILEGES privilege on the database created from the share.

What will happen to the consumer account if a new table (table_6) is added to the provider schema?

When a new table (table_6) is added to a provider schema whose other tables are part of a data share, the consumer does not automatically see the new table. Objects become visible through a share only by explicit grants, so the provider must grant access to table_6 on the share. Using the ACCOUNTADMIN role, the provider ensures that the share has USAGE on the database and schema (already the case, because five tables from that schema are shared) and then grants SELECT on the new table to the share. No further action is needed in the consumer account: because the consumer role already holds IMPORTED PRIVILEGES on the database created from the share, it can query table_6 as soon as the provider's grant is in place. References:

Snowflake Documentation on Managing Access Control

Snowflake Documentation on Data Sharing
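The provider-side grants described above can be sketched as a small Python helper that builds the SQL statements to run. This is an illustrative sketch only: the function name and the identifiers `sales_db`, `public`, and `sales_share` are hypothetical (only `table_6` comes from the scenario), and the helper just returns strings rather than executing anything.

```python
def grants_for_new_shared_table(database, schema, table, share, include_usage=True):
    """Build provider-side GRANT statements exposing one more table through an existing share.

    All identifiers are supplied by the caller (hypothetical in the example below).
    USAGE on the database and schema is normally already granted to the share when
    other tables from the same schema are shared, so it can be left out.
    """
    statements = ["USE ROLE ACCOUNTADMIN;"]
    if include_usage:
        statements.append(f"GRANT USAGE ON DATABASE {database} TO SHARE {share};")
        statements.append(f"GRANT USAGE ON SCHEMA {database}.{schema} TO SHARE {share};")
    # The grant that actually makes the new table visible to consumers of the share.
    statements.append(f"GRANT SELECT ON TABLE {database}.{schema}.{table} TO SHARE {share};")
    return statements


for stmt in grants_for_new_shared_table("sales_db", "public", "table_6", "sales_share",
                                         include_usage=False):
    print(stmt)
```

Because USAGE is already in place for the five existing tables, only the `GRANT SELECT ... TO SHARE` statement is strictly new in this scenario; nothing changes on the consumer side.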

The diagram shows the process flow for Snowpipe auto-ingest with Amazon Simple Notification Service (SNS) with the following steps:

Step 1: Data files are loaded in a stage.

Step 2: An Amazon S3 event notification, published by SNS, informs Snowpipe by way of Amazon Simple Queue Service (SQS) that files are ready to load. Snowpipe copies the files into a queue.

Step 3: A Snowflake-provided virtual warehouse loads data from the queued files into the target table based on parameters defined in the specified pipe.
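The notification chain in these steps can be illustrated with a toy Python model. It is a loose simplification, not how Snowflake or AWS are implemented: `SnsTopic` and `ToyPipe` are hypothetical classes, a `deque` stands in for the SQS queue, and the S3 paths are made up. The point it shows is that the pipe only hears about files that reach its queue through the subscription.

```python
from collections import deque


class SnsTopic:
    """Toy fan-out topic: each published message is copied to every subscribed queue."""

    def __init__(self):
        self.subscriptions = []

    def subscribe(self, queue):
        self.subscriptions.append(queue)

    def unsubscribe(self, queue):
        # Compare by identity so that only this exact queue object is removed.
        self.subscriptions = [q for q in self.subscriptions if q is not queue]

    def publish(self, message):
        for queue in self.subscriptions:
            queue.append(message)


class ToyPipe:
    """Toy pipe that learns about new files only through its own notification queue."""

    def __init__(self, topic):
        self.queue = deque()
        self.loaded = []
        topic.subscribe(self.queue)  # stands in for the SQS subscription to the SNS topic

    def run(self):
        # Drain the queue and "load" every file it references.
        while self.queue:
            self.loaded.append(self.queue.popleft())


topic = SnsTopic()
pipe = ToyPipe(topic)

topic.publish("s3://example-bucket/file_1.csv")  # S3 event fans out via SNS to the queue
pipe.run()                                        # file_1 is loaded
topic.unsubscribe(pipe.queue)                     # the subscription is deleted
topic.publish("s3://example-bucket/file_2.csv")  # no queue receives this event now
pipe.run()
print(pipe.loaded)  # ['s3://example-bucket/file_1.csv']
```

After the subscription is removed, events still reach the topic, but nothing is delivered to the pipe's queue, so file_2 is never loaded.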

If an AWS Administrator accidentally deletes the SQS subscription to the SNS topic in Step 2, what will happen to the pipe that references the topic to receive event messages from Amazon S3?

If an AWS administrator accidentally deletes the SQS subscription to the SNS topic in Step 2, the pipe that references the topic will no longer receive event messages from Amazon S3. The SQS subscription is the link between the SNS topic and the Snowpipe notification channel; without it, SNS cannot deliver notifications to the Snowpipe queue, so the pipe is never triggered to load new files. To restore the system immediately, the user must manually create a new SNS topic with a different name and then recreate the pipe, specifying the new SNS topic name in the pipe definition. This creates a new notification channel and a new SQS subscription for the pipe. Alternatively, once the deleted subscription has been cleared on the AWS side, the pipe can be recreated with the same SNS topic name in its definition, which also restores the notification channel and the pipe's functionality, though not immediately. References:

Automating Snowpipe for Amazon S3

Enabling Snowpipe Error Notifications for Amazon SNS

HowTo: Configuration steps for Snowpipe Auto-Ingest with AWS S3 Stages

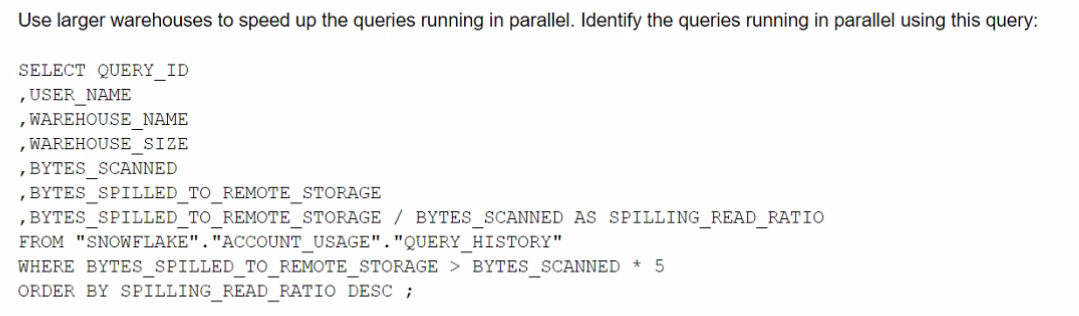

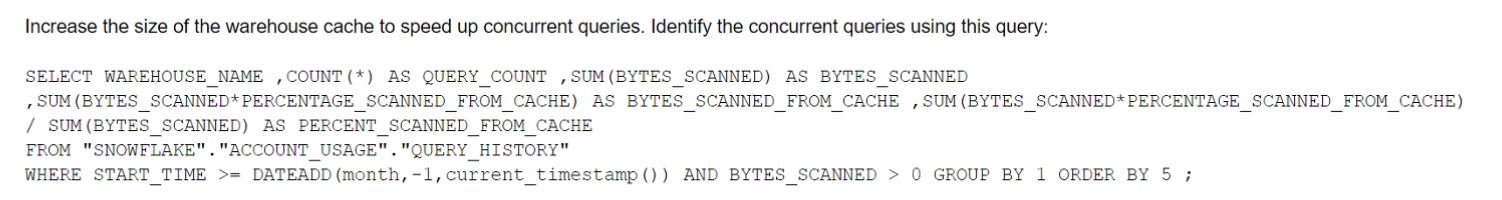

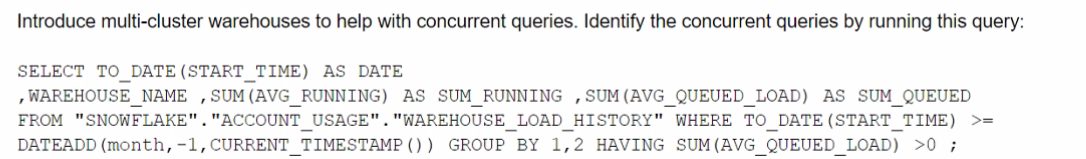

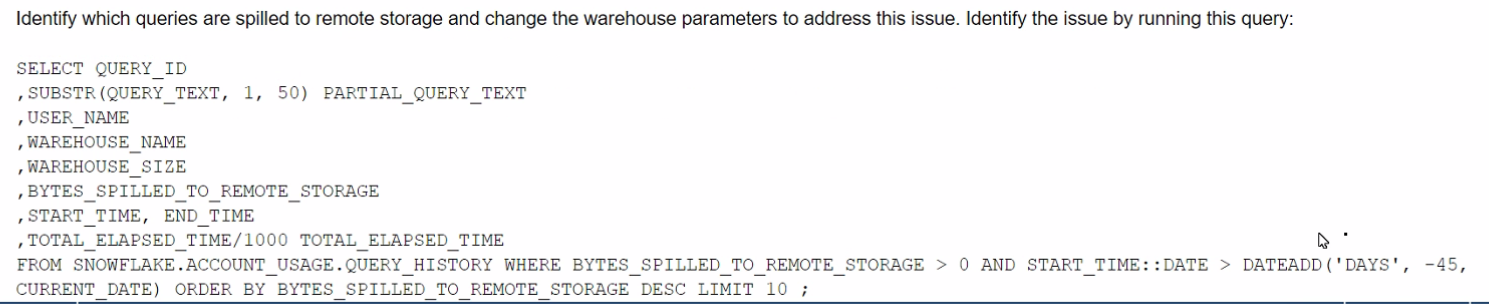

The Business Intelligence team reports that when some team members run queries for their dashboards in parallel with others, the query response time becomes significantly slower. What can a Snowflake Architect do to identify what is occurring and troubleshoot this issue?

A)

B)

C)

D)
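Slowdowns that appear only when dashboards run in parallel often point to queries waiting on a saturated warehouse. The `SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY` view records, per query, the `WAREHOUSE_NAME` and `QUEUED_OVERLOAD_TIME` (milliseconds spent queued because the warehouse was overloaded). The sketch below assumes rows from that view have already been fetched into Python dictionaries; the sample rows and the warehouse names `BI_WH` and `ETL_WH` are hypothetical.

```python
from collections import defaultdict


def queuing_summary(rows):
    """Per-warehouse summary of queries that waited because the warehouse was overloaded.

    Each row is assumed to be a dict with WAREHOUSE_NAME and QUEUED_OVERLOAD_TIME
    (milliseconds), mirroring two columns of SNOWFLAKE.ACCOUNT_USAGE.QUERY_HISTORY.
    """
    stats = defaultdict(lambda: {"queries": 0, "queued_queries": 0, "queued_ms": 0})
    for row in rows:
        s = stats[row["WAREHOUSE_NAME"]]
        s["queries"] += 1
        if row["QUEUED_OVERLOAD_TIME"] > 0:
            s["queued_queries"] += 1
            s["queued_ms"] += row["QUEUED_OVERLOAD_TIME"]
    return dict(stats)


# Hypothetical sample rows; in practice they would be fetched from the view for the
# time window in which the dashboards were slow.
sample = [
    {"WAREHOUSE_NAME": "BI_WH", "QUEUED_OVERLOAD_TIME": 0},
    {"WAREHOUSE_NAME": "BI_WH", "QUEUED_OVERLOAD_TIME": 6000},
    {"WAREHOUSE_NAME": "BI_WH", "QUEUED_OVERLOAD_TIME": 4000},
    {"WAREHOUSE_NAME": "ETL_WH", "QUEUED_OVERLOAD_TIME": 0},
]

for warehouse, s in queuing_summary(sample).items():
    print(f"{warehouse}: {s['queued_queries']}/{s['queries']} queued, {s['queued_ms']} ms total")
```

Many queued queries on the BI warehouse indicate concurrency pressure rather than individually slow queries; scaling out with a multi-cluster warehouse is a common response to that pattern.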

What Snowflake features should be leveraged when modeling using Data Vault?

Two Snowflake features are particularly relevant when modeling with Data Vault: multi-table inserts and virtual warehouses. Data Vault is a data modeling approach that organizes data into hubs, links, and satellites, and it is designed for scalability, flexibility, and performance in data integration and analytics. Snowflake supports various data modeling techniques, including Data Vault. Multi-table inserts let a single statement read a source once and load several hub, link, and satellite tables, while virtual warehouses can be sized and scaled so that new source loads are processed in parallel. References:

Snowflake Documentation: Multi-table Inserts

Snowflake Blog: Tips for Optimizing the Data Vault Architecture on Snowflake

Snowflake Documentation: Virtual Warehouses

Snowflake Blog: Building a Real-Time Data Vault in Snowflake
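To make the multi-table insert idea concrete, here is a minimal Python sketch (not Snowflake code) of what one such load does: a single pass over a staged batch populates a customer hub, an order hub, their link, and an order satellite. The MD5 hash keys, the `||` delimiter, the normalization rules, and every table and column name are assumptions for illustration; Data Vault implementations vary in these conventions.

```python
import hashlib


def hash_key(*business_keys):
    """Hash key in a common Data Vault style: MD5 of trimmed, upper-cased keys joined by '||'."""
    normalized = "||".join(str(k).strip().upper() for k in business_keys)
    return hashlib.md5(normalized.encode("utf-8")).hexdigest()


def load_data_vault(staged_rows, load_date, record_source):
    """Single pass over staged rows that feeds hub, link and satellite targets at once.

    Conceptually similar to an unconditional multi-table insert (INSERT ALL) that reads
    the source once; all table and column names here are hypothetical.
    """
    hub_customer, hub_order, link_customer_order = {}, {}, {}
    sat_order = []
    for row in staged_rows:
        hk_customer = hash_key(row["customer_id"])
        hk_order = hash_key(row["order_id"])
        hk_link = hash_key(row["customer_id"], row["order_id"])
        meta = {"load_date": load_date, "record_source": record_source}
        # Hubs and links keep one entry per hash key (insert-only, no duplicates).
        hub_customer.setdefault(hk_customer, {"customer_id": row["customer_id"], **meta})
        hub_order.setdefault(hk_order, {"order_id": row["order_id"], **meta})
        link_customer_order.setdefault(
            hk_link, {"hk_customer": hk_customer, "hk_order": hk_order, **meta}
        )
        # Satellites store descriptive attributes plus a hash diff for change detection.
        sat_order.append({
            "hk_order": hk_order,
            "hash_diff": hash_key(row["status"], row["amount"]),
            "status": row["status"],
            "amount": row["amount"],
            **meta,
        })
    return {
        "hub_customer": hub_customer,
        "hub_order": hub_order,
        "link_customer_order": link_customer_order,
        "sat_order": sat_order,
    }


# Hypothetical staged batch: the two customer IDs normalize to the same business key.
staged = [
    {"customer_id": "C1", "order_id": "O1", "status": "NEW", "amount": 120},
    {"customer_id": "c1 ", "order_id": "O2", "status": "SHIPPED", "amount": 75},
]
vault = load_data_vault(staged, load_date="2024-01-01", record_source="orders_stage")
print({name: len(rows) for name, rows in vault.items()})
```

Running it yields one customer hub row and two rows in each of the other targets. In Snowflake the equivalent would typically be written as a single `INSERT ALL` statement so the staged data is scanned once, with independent source loads run in parallel on suitably sized warehouses.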